Fundamental Rights Impact Assessments (FRIAs) are a new and evolving tool. They can be a lever for civil society to ensure technology serves people and protects rights. By understanding the FRIA framework, pursuing FRIA information, and using it in advocacy and legal strategies, we can push for AI that respects and uplifts human rights. This module explains what FRIAs are, who must do them, how to access FRIA information, and how civil society can use FRIAs for accountability and change.

By the end of this learning package, you will:

- Understand what a FRIA is and how it differs from other impact assessments;

- Identify which actors must perform FRIAs under the EU AI Act and in which scenarios;

- Spot key elements of a strong vs. weak FRIA using simple criteria;

- Identify ways your organisation can use FRIAs in advocacy, campaigns and legal strategies;

- Know where to look for FRIA-related information.

The EU’s Artificial Intelligence Act creates new obligations for deployers of “high-risk” AI systems. Public authorities and some companies are mandated to consider fundamental rights impacts before rolling out high-risk AI.

A FRIA is not just a bureaucratic checklist. When done properly, it can prevent harms before they happen, as well as make it possible to hold AI deployers accountable for rights violations after the system is put into use.

Reflect on these questions:

- Why might FRIAs be important, or not, for people impacted by an AI system?

- What elements do you think are key to an effective FRIA?

- How could FRIA information bolster your advocacy?

In simple terms, a FRIA is a pre-deployment check that asks which rights might be impacted, who could be harmed, and what can be done to prevent or mitigate harm before deployment.

How is a FRIA different from other assessments?

- Data Protection Impact Assessment (DPIA): A DPIA (required under the GDPR for certain data processing) in practice tends to focus on privacy and data protection risks. A FRIA is broader, covering all fundamental rights (equality, dignity, freedom, etc.) and does not only consider risks resulting from personal data processing. Where a DPIA is also required, the FRIA should complement it by focusing on the wider rights impacts rather than duplicating the privacy analysis.

- Human Rights Impact Assessment (HRIA): HRIAs are used in various sectors to evaluate and address human rights impacts of projects or policies. FRIAs refer to “fundamental” rather than “human” rights only because this is the terminology used in EU law, including the AI Act. FRIAs remain conceptually linked with human rights due diligence principles, e.g. those found in the United Nations Guiding Principles on Business and Human Rights (UNGPs).

Assessing AI’s fundamental rights impacts evolved from earlier practices and growing recognition of AI’s societal risks:

- Business and human rights movement: HRIAs have become part of corporate responsibility to respect human rights. International standards, such as the UNGPs, expect organisations to identify, assess, and mitigate actual or potential human rights harms from products, services, or operations. This due diligence logic underpins the FRIA concept in the AI Act.

- Existing impact assessments in law: The EU has introduced assessment duties in related domains, including DPIAs under the GDPR. More recently, the Digital Services Act (DSA) requires large platforms to assess “systemic risks” affecting rights and society.

- From ethics to enforcement: For years, AI governance relied heavily on voluntary ethical AI principles. These helped set expectations but often lacked accountability. High-profile cases of rights-invasive AI contributed to calls for binding, risk-based regulation.

Under the AI Act, FRIAs are mandatory for certain deployers of high-risk systems. You can find more information on what falls under “high-risk” in ECNL’s EU AI Act Learning Center module. Those who must conduct a FRIA before deployment are:

- Public authorities using high-risk AI systems, for example a municipality using AI to allocate benefits;

- Companies that provide public services, for example a private hospital using a high-risk AI system to prioritise patients for treatment, which must therefore conduct a FRIA before first use;

- Banks and insurance companies using AI for credit scoring and assessment of insurance premiums.

The obligation applies before the first use of the system. Companies that develop the systems have their own risk assessment obligations under the AI Act. The role of the FRIA is to ensure full protection of rights across the entire AI system lifecycle and to consider impacts specific to the context in which the AI system is used.

While the EU AI Act makes FRIAs mandatory for certain deployments of high-risk AI systems, organisations can also use FRIA-style assessments voluntarily for lower-risk uses. In the Netherlands, the government is pursuing a broader approach to algorithm governance and transparency through tools such as the public Algorithm Register, which is currently voluntary but is intended to become mandatory for impactful algorithms.

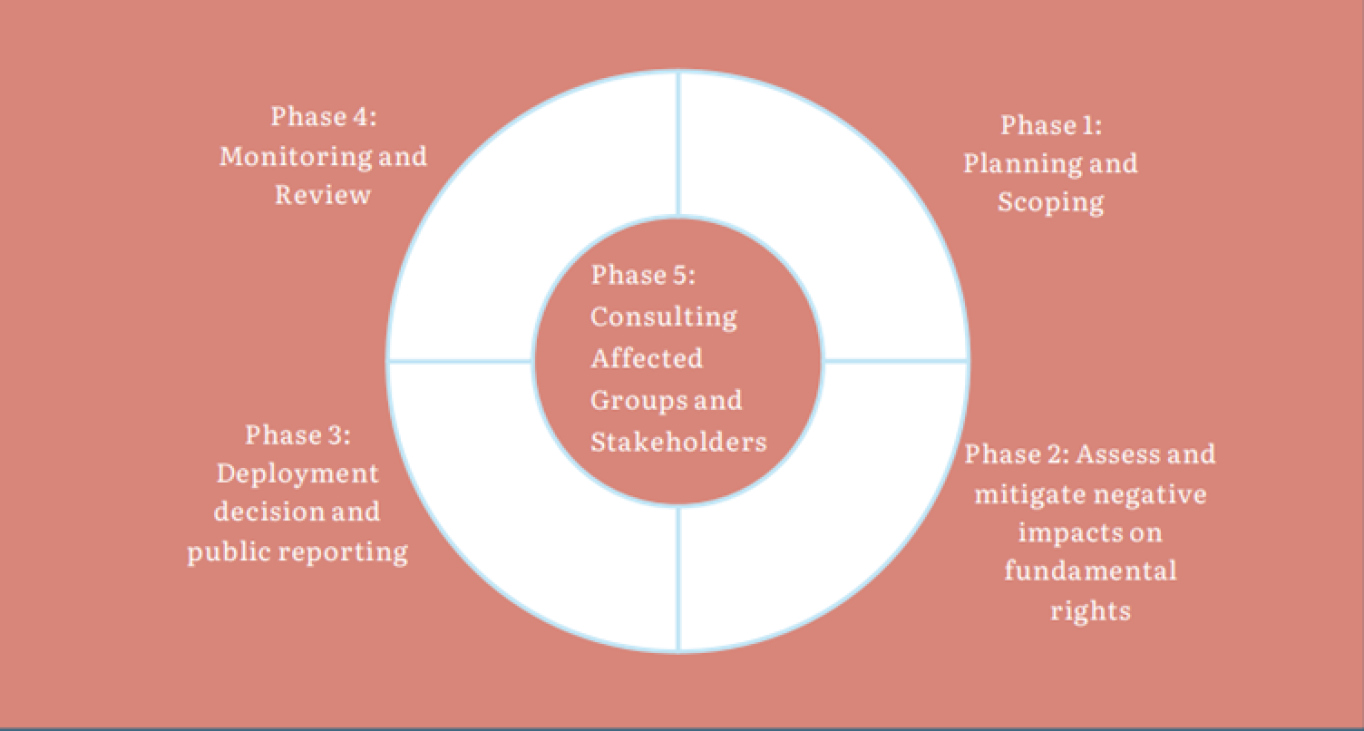

ECNL and the Danish Institute for Human Rights developed a model for a meaningful FRIA, consisting of 5 key phases:

Phase 1. Planning and scoping: Decide when the FRIA will take place, who will conduct it and who will be consulted, what resources are needed, and which AI system and use context are being assessed.

Phase 2. Assessing and mitigating impacts: Identify rights risks using scenarios, assess severity and likelihood, and define mitigation measures.

Phase 3. Deployment decision and public reporting: Decide whether to deploy the system, considering remaining risks and proportionality. Publish a summary of FRIA findings in line with transparency obligations.

Phase 4. Monitoring and review: Monitor impacts after deployment, check if mitigations work, and update the FRIA when circumstances change.

Phase 5. Consultation with affected groups (cross-cutting): Engage affected individuals or their representatives, experts and civil society throughout the process to validate assumptions and improve mitigation.

The quality of impact assessments often leaves a lot to be desired. How can you recognise a good and a bad FRIA?

Signs of a Strong FRIA

- Detailed system description: Clear purpose, context, key technical details and decisions influenced.

- Clear identification of rights and affected groups: Names all likely impacted rights and groups, supported by evidence or realistic examples.

- Meaningful stakeholder participation: Notes input from CSOs, affected communities, and/or independent experts, not only internal actors, and discusses how this feedback was implemented.

- Concrete mitigation measures: Specific safeguards to minimise impact with responsibilities, resources, and follow-up assigned, ideally time-bound and verifiable.

- Built into decision-making: Shows how FRIA results shaped the deployment decision, including the option to delay or not deploy the system if risks cannot be mitigated.

- Consideration of alternatives: Discusses less intrusive options, including “no AI”, and explains the choice.

- Monitoring plan: Explains how impacts will be monitored and when the FRIA will be reviewed or updated.

- Transparent summary: Plain-language summary that covers findings, mitigations, and how concerns can be raised.

Signs of a Weak FRIA

- Vague/Superficial conclusions without analysis: “Minimal risk” or “we comply with law” with no evidence.

- Only privacy-focused: Treats FRIA like a DPIA, ignores discrimination, freedom of expression, social and economic impacts, and other fundamental rights not tied to privacy.

- No (meaningful) consultation: No engagement with affected groups or only token input with no influence.

- Superficial mitigations: Generic measures (training, awareness) without meaningful procedural or technical safeguards, or relying only on provider assurances.

- No alternatives considered: Reads like a justification for a decision already made.

- Weak transparency: Confirms a FRIA happened but discloses little about risks, affected groups, or mitigations, and relies on broad confidentiality claims without substantive summaries or redactions.

- No follow-up plan: Unsatisfactory/no monitoring, review, or update mechanism.

In one sentence: A strong FRIA is informative and proactive; a weak FRIA is obscure and superficial.

Access to information and awareness

FRIAs are intended to demonstrate the expected impact of a high-risk system. They outline how the deployer assessed risks and what mitigations they included, helping you spot and monitor weak safeguards or discrepancies in how they are applied in practice, for instance where the deployer ignored crucial risks or where the stated mitigations do not exist or are ineffective. FRIA information also provides tangible evidence which you can use to support your organisation’s narratives and campaign messages.

Accountability

FRIAs can provide you with evidence of weak human rights due diligence, weak follow-through, low transparency, or no real stakeholder input, thereby serving as a crucial tool for holding those who use AI systems to account. Based on information included in FRIAs, you can, for example, submit complaints to the Market Surveillance Authority (MSA) or take other legal action, for instance in national courts or before national human rights institutions. You can also use FRIAs to build evidence and arguments for regulators and policymakers to act upon (for example, to prohibit certain systems).

Accountability in Practice

- Engage with deployers based on findings: Use FRIA content to interrogate assumptions (who is affected, what risks were considered, what evidence supports likelihood/severity), contest insufficient mitigations, and press for concrete changes. Such changes could include additional safeguards, monitoring commitments, or a pause on deployment until risks are addressed. This forces the deployer to respond to identified gaps, and creates a documented record of concerns and the deployer’s follow-through.

- Use in Complaints and Strategic Litigation: FRIA documentation can strengthen legal arguments that a deployer failed to conduct a required FRIA, failed to account for relevant risks, or adopted mitigations that are not effective for the use context. This can support arguments that the system should not be used as deployed, and can complement other accountability routes, including the right to lodge a complaint with a Market Surveillance Authority or in a national court.

- Escalate to Market Surveillance and Oversight Bodies: Where FRIA findings suggest non-compliance or serious risk, they can support a complaint to a Market Surveillance Authority, which can act on complaints. This could trigger scrutiny from supervisory bodies. Institutions protecting fundamental rights in your country (such as equality bodies or national human rights institutions) may also seek access to AI Act documentation when necessary for their mandates.

Practical recommendation for civil society: Submit a short, evidence-led brief that pinpoints which rights and groups are affected, which FRIA elements appear missing or weak, and what action you recommend (for example, an inquiry, a request for additional documentation, or independent testing via the competent authority/MSA route).

Public pressure and campaigns

FRIA findings can also be used to bolster campaigns by turning deployers’ claims on impacts into tangible evidence of fundamental rights violations. Because FRIAs are meant to feed transparency and oversight over use of high-risk AI, information that they reveal can support media work, coalition mobilisation, and clear campaign asks, like improving mitigations, or potentially pausing deployment until risks are properly addressed, ideally with input from affected groups.

EU AI Registry

The AI Act establishes an EU database of high-risk AI systems. It is intended as a public registry where information about high-risk AI systems placed on the EU market or put into service is recorded, with submissions by providers and deployers.

What information will be available?

The AI Act sets out information that should be published both by developers of high-risk AI systems and by deployers. Importantly, public sector deployers must publish the summary of the results of the FRIA, linked to the AI system entry, alongside deployer identity and contact details. Note that this obligation only applies to public authorities, meaning that private companies required to conduct an impact assessment (banks and insurance companies) do not have to publish the results, which makes public scrutiny more difficult.

Public or not?

Most of the database is intended to be public and user friendly. However, information on high-risk AI systems used in the areas of law enforcement, migration, asylum, and border control will only be available in a non-public part of the database, accessible only to the Commission and competent authorities. This significantly limits public oversight and accountability of AI used by the police or border agencies.

Why does this matter for CSOs?

The database is a key mechanism under the AI Act to help you identify what systems exist, who deploys them and in what context, and whether FRIA summaries are available. Where FRIA information is available, it provides insight into how risks to rights were identified and addressed, allowing civil society watchdogs to learn more about the system and potentially challenge the findings of the impact assessment.

Once the database is live, you can monitor systems in your country or sector, and track FRIA summaries over time. This can be the springboard for further inquiries.

National registries

Alongside the AI Act’s EU database for high-risk AI systems, some countries also maintain national public sector algorithm registries. For example, the Dutch Algoritmeregister provides public information about algorithms used by public authorities, and covers a broader set of systems than the EU database, including lower-risk and simpler tools. Because the EU database is relatively narrow in scope, CSOs can point to national examples such as the Dutch registry to advocate for more ambitious national transparency mechanisms, particularly for public sector algorithms.

Freedom of Information Requests

Sometimes FRIA information is not readily accessible. For example, the summary in the EU database may be too high-level, the system may be used by law enforcement or a border authority and therefore not be publicly available through the database, or you may need the full FRIA report.

Freedom of Information (FOI) or access-to-documents laws can help. Most European countries allow requests for documents held by public authorities, subject to exceptions. At EU level, there is also a right to access documents.

Complete FRIA information is held by deployers and by the competent authorities that must be notified of the results. In practice, it might be more effective to send an FOI request directly to the deployer. If this is unsuccessful, you can try to seek information from a supervisory authority or from fundamental rights authorities, but note that they are bound by the confidentiality requirements of Art. 78 of the AI Act.

Four steps to get FRIA Information

- Identify the system and responsible entities: Use the EU database and other sources (e.g. media reports, government documents) to identify deployers and whether a FRIA summary exists.

- Request documentation: Use Freedom of Information laws to request that the deployer or, where relevant, the competent authority provide FRIA reports and/or related records such as minutes, internal guidance, or procurement materials.

- Follow up on refusals: Request partial access, refine requests, and use appeal mechanisms.

- Escalate and publicise: Use legal appeal, oversight bodies, or media pressure if transparency is still blocked.

If your request is refused, most countries provide a right to appeal in some form (administrative appeal, information commissioner, ombudspeople, courts). In some cases, involving journalists can increase pressure and transparency.

- For a comparative overview of national Freedom of Information laws (scope, timelines, exceptions, appeals), consult the Global Right to Information (RTI) Rating.

- For practical, country-specific filing tips and request strategies across multiple jurisdictions, see Access Info’s Legal Leaks Toolkit.

- For accessing EU documents, as well as drafting an FOI request, read Access Info’s guidance.

The FRIA process is only meaningful if people affected by the planned system, or their representatives, were consulted and their input influenced the decisions of the deployer. Although the AI Act does not mandate participation and only encourages it in Recital 96, civil society should demand to be consulted, arguing that without their input the deployer cannot accurately assess all potential impacts.

As a practical example, in welfare eligibility or fraud detection systems, migrant organisations can highlight practical exclusion risks, such as language barriers, documentation mismatches, informal housing situations, or biased proxies that increase false positives for certain communities, leading to wrongful denials, stigma, or investigations.

Why participation matters

Meaningful participation in a FRIA can achieve substantive, procedural, and strategic outcomes, beyond what technical or desk-based assessments can deliver. This helps affected people, CSOs and deployers alike to prevent harm and reduce blind spots in mitigation.

- Better risk identification and mitigation: Contributions from civil society and affected people help spot real-world harms and check whether the planned safeguards will reduce them.

- Better decision-making: Participation improves decision-making by testing assumptions against real-world experience, helping the deployer choose proportionate safeguards, narrow the use case, or halt deployment.

- Better accountability and governance: Participation creates a clear record of concerns and responses, which strengthens oversight and makes it easier to challenge weak practice.

Taken together, participation shifts the FRIA from a compliance exercise to a preventive governance tool.

What meaningful participation looks like

In ECNL’s Framework for Meaningful Engagement 2.0, we argue that meaningful participation is based on three principles: creating a shared purpose, running an inclusive and transparent process people can trust, and showing visible impact by acting on input and reporting back on what changed.

Entry points for participation:

- Before the assessment: scoping, prioritising rights and groups, shaping questions.

- During the assessment: interviews, focus groups, workshops, surveys, written submissions.

- During mitigation design: testing feasibility and sufficiency of safeguards.

- After completion: monitoring, review, and FRIA updates when the system changes.

Reflect on what you have learnt:

- “Where is the FRIA?” Think of an AI system used, or proposed, in your community that worries you. Will you ask this question next time it is discussed, and what is your most effective route to get an answer?

- In your work, which issue could benefit most immediately from requesting or analysing a FRIA?

- Who in your network could help you act (legal/FOI support, technical expertise, community testimony, organisers)?

- How will you make FRIA monitoring routine (database checks, alerts, or an annual review)?

Ioana Tuta (The Danish Institute for Human Rights) says:

"A FRIA is a critical prevention and accountability tool for AI-enabled human rights harms – but, indeed, its potential is very much dependent on certain implementation choices. My top elements for any organisation to consider when examining a FRIA would be

- Whether deployers have a robust governance framework and assigned top level responsibility and accountability for the FRIA,

- Whether the FRIA team counts with human rights competences and has been given a mandate to critically deliberate about the human rights impacts, including to potentially advise against the deployment of the AI system,

- Whether deployers have conducted meaningful stakeholder engagement, including with potentially affected people."

- ECNL Learning Center package on the EU AI Act (for legal context)

- ECNL Learning Center Introduction: AI systems and human rights (for basic concepts)

- A Guide to Fundamental Rights Impact Assessments (for practitioners)

- Framework for Meaningful Engagement 2.0